The Mobilize Center’s software efforts are organized around two Technology Research and Development (TR&D) projects.

TR&D Project 1

Biomechanics via Wearable Sensors

Our technology pushes the bounds of what we can measure via wearable sensors. Focusing on biomechanical quantities beyond steps, we are creating validated models that enable researchers to explore novel uses of wearable sensors. Our efforts draw on our team’s expertise in both biomechanical and machine-learning approaches.

OpenSim

Software platform with both an API and a GUI for musculoskeletal modeling and simulation

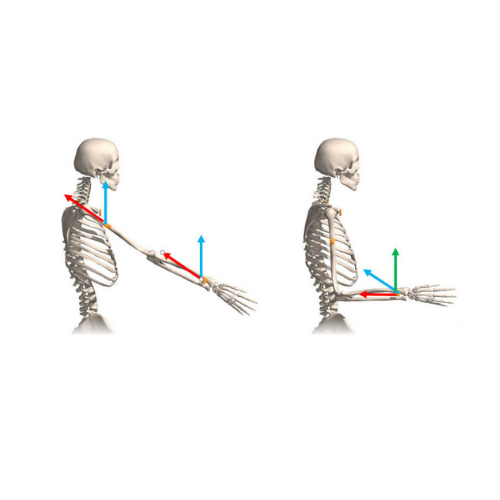

OpenSense

Software tool for analyzing movement with inertial measurement units (IMUs)

OpenSim Moco

Software tool for solving musculoskeletal optimization problems

AddBiomechanics

Software for computing inverse kinematics and dynamics automatically from motion capture files

Metabolic Expenditure Estimates

Software for estimating energy expenditure during movement using two leg-worn inertial measurement units (IMUs)

TR&D Project 2

Machine Learning for Mobility Data

To fill the need for tools that analyze data about movement and rehabilitation, we are developing machine-learning models that generate insights from unstructured, high-dimensional data, including time series (e.g., from mobile sensors), images (e.g., MRI), and video (e.g., smartphone video of a patient’s gait).

OpenCap

2D video-based technology to record motion and estimate musculoskeletal forces using smartphones

Sit2Stand

Software for computing sit-to-stand biomechanics using a mobile phone

DeepFOG

Software for detecting freezing of gait using inertial measurement units (IMUs)

DOSMA

Software library for deep-learning-based analysis of medical images, such as MRI

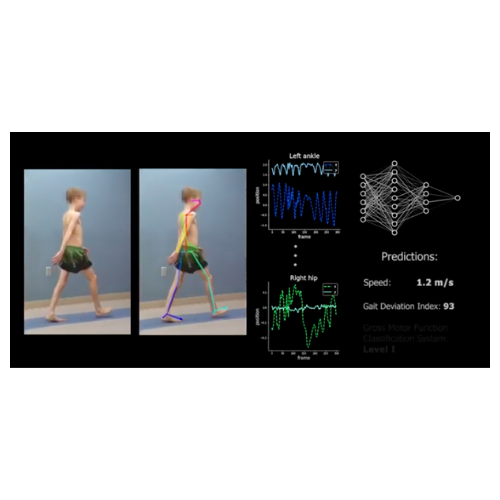

CP GaitLab

Software for gait analysis from 2D video, such as video captured by a smartphone

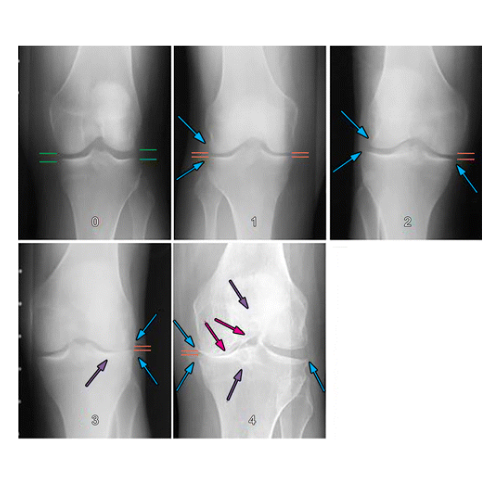

KneeNet

Machine-learning models to automatically compute meaningful quantities for knee osteoarthritis from medical images

Comp2Comp

Software for rapid, automated body composition analysis of computed tomography scans

Snorkel

A platform for developing deep-learning models from unstructured data, like text and images